Gemini multimodal embedding model

Google launched Gemini Embedding 2, a natively multimodal embedding model. Embedding models are increasingly used for agent memory, and agentic assistants like OpenClaw need to remember more than just text. That’s where natively multimodal embedding models come in.
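As a rough illustration of why embeddings suit agent memory, the sketch below stores items alongside vectors and recalls the nearest one by cosine similarity. The vectors are hand-made toy values, not output from Gemini Embedding 2, and `MemoryItem`, `cosine`, and `recall` are hypothetical names chosen for this example. The point is only that, with a multimodal model, text and image memories would live in one vector space, so a single query could surface either kind.

```python
import math
from dataclasses import dataclass


@dataclass
class MemoryItem:
    kind: str            # "text" or "image"; hypothetical label
    ref: str             # what the agent stored (a note, a file name, ...)
    vector: list[float]  # toy embedding; a real one would come from the model


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def recall(memory: list[MemoryItem], query: list[float], k: int = 2) -> list[MemoryItem]:
    """Return the k stored items most similar to the query vector."""
    return sorted(memory, key=lambda m: cosine(m.vector, query), reverse=True)[:k]


memory = [
    MemoryItem("text", "user prefers dark mode", [0.9, 0.1, 0.0]),
    MemoryItem("image", "whiteboard-photo.png", [0.1, 0.9, 0.2]),
    MemoryItem("text", "meeting moved to Friday", [0.0, 0.2, 0.9]),
]

# A query vector deliberately close to the whiteboard photo's toy embedding.
top = recall(memory, [0.2, 0.8, 0.1], k=1)
print(top[0].ref)
```

In a real system the linear scan would typically be replaced by a vector index, but the retrieval idea is the same.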

A2A Protocol hits v1.0 milestone

A2A (Agent-to-Agent) v1.0 was released this week. A2A is an open protocol that lets AI agents discover each other’s capabilities, communicate, and delegate tasks across different technology stacks and organizational boundaries, addressing the interoperability problem in multi-agent systems. It’s complementary to MCP rather than a replacement: MCP handles tool/context integration within an agent, while A2A handles communication between agents.
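The discover-then-delegate flow can be sketched in a few lines. This is a toy, in-process model and not the A2A wire format or any A2A SDK: `AgentCard` here is a simplified stand-in for a published capability description, and `Registry`, `delegate`, and the skill names are hypothetical. Real agents would exchange these messages over the network.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class AgentCard:
    """Simplified stand-in for an agent's published capability description."""
    name: str
    skills: set[str]
    handle: Callable[[str], str]  # hypothetical in-process task handler


class Registry:
    """Toy discovery layer: agents publish cards, callers look them up by skill."""

    def __init__(self) -> None:
        self._cards: list[AgentCard] = []

    def publish(self, card: AgentCard) -> None:
        self._cards.append(card)

    def discover(self, skill: str) -> Optional[AgentCard]:
        # Return the first agent that advertises the requested skill, if any.
        return next((c for c in self._cards if skill in c.skills), None)


def delegate(registry: Registry, skill: str, task: str) -> str:
    """Find an agent advertising the skill and hand the task to it."""
    card = registry.discover(skill)
    if card is None:
        raise LookupError(f"no agent advertises skill {skill!r}")
    return card.handle(task)


registry = Registry()
registry.publish(AgentCard("translator", {"translate"}, lambda t: f"[translated] {t}"))
registry.publish(AgentCard("summarizer", {"summarize"}, lambda t: f"[summary] {t}"))

result = delegate(registry, "summarize", "quarterly report")
print(result)
```

The caller never needs to know how the summarizer is built, only what it advertises, which is the interoperability point the protocol is aiming at.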

NanoClaw partners with Docker

Docker is partnering with NanoClaw to run the agent inside a disposable, MicroVM-based Docker Sandbox. It “enforces strong operating system level isolation. Combined with NanoClaw’s minimal attack surface and fully auditable open-source codebase, the stack is purpose-built to meet enterprise security standards from day one.”

Chrome 146 MCP updates

The Chrome 146 release includes the DevTools MCP Server v0.19 update:

- Integrated Lighthouse audits: You can now run Lighthouse audits directly through MCP, enabling automated performance and quality checks within your agentic workflows.

- Slim Mode: A new --slim mode is now available, designed to optimize tool descriptions and parameters for maximum token savings.

- New Debugging Skills: Added dedicated skills specifically for auditing and debugging accessibility, as well as debugging and optimizing Largest Contentful Paint (LCP).

- Expanded Tooling and Capabilities: The server now supports storage-isolated browser contexts, experimental screencast recording, a new take_memory_snapshot tool, and advanced interaction capabilities like type_text.

MCP for Android

AppFunctions lets Android apps expose capabilities directly to AI agents.

Cloudflare /crawl endpoint

With Cloudflare’s /crawl endpoint you can initiate a crawl job with just a URL and get a job ID back. You can then poll or wait for the results. Target use cases include gathering data for model training, prompt augmentation in RAG systems, and researching or monitoring content.
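The submit-then-poll pattern might look roughly like the sketch below. It does not call Cloudflare: the HTTP layer is replaced by a fake `fake_status` function, and the `status` field, its `"running"` / `"completed"` values, the `pages` key, and the `wait_for_job` helper are all assumptions made for illustration rather than the endpoint's documented response shape.

```python
import time
from typing import Callable


def wait_for_job(
    get_status: Callable[[str], dict],
    job_id: str,
    interval: float = 1.0,
    max_attempts: int = 10,
    sleep: Callable[[float], None] = time.sleep,
) -> dict:
    """Poll get_status(job_id) until the job leaves the 'running' state."""
    for _ in range(max_attempts):
        status = get_status(job_id)
        if status.get("status") != "running":  # hypothetical status field
            return status
        sleep(interval)
    raise TimeoutError(f"job {job_id} still running after {max_attempts} polls")


# Fake backend standing in for the HTTP calls: "running" twice, then done.
_responses = iter([
    {"status": "running"},
    {"status": "running"},
    {"status": "completed", "pages": ["https://example.com/"]},
])


def fake_status(job_id: str) -> dict:
    return next(_responses)


# Pass a no-op sleep so the example finishes instantly.
final = wait_for_job(fake_status, "job-123", sleep=lambda s: None)
print(final["status"])
```

Capping the number of attempts keeps a stuck job from blocking the caller indefinitely; a real client would likely add backoff between polls as well.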

Claude Code updates

/btw - Have a side conversation with the LLM while another task is running

Applied + Research

One day, frontier AI research used to be done by meat computers in between eating, sleeping, having other fun, and synchronizing once in a while using sound wave interconnect in the ritual of “group meeting”. That era is long gone. Research is now entirely the domain of autonomous swarms of AI agents running across compute cluster megastructures in the skies. The agents claim that we are now in the 10,205th generation of the code base, in any case no one could tell if that’s right or wrong as the “code” is now a self-modifying binary that has grown beyond human comprehension. This repo is the story of how it all began. -@karpathy, March 2026.

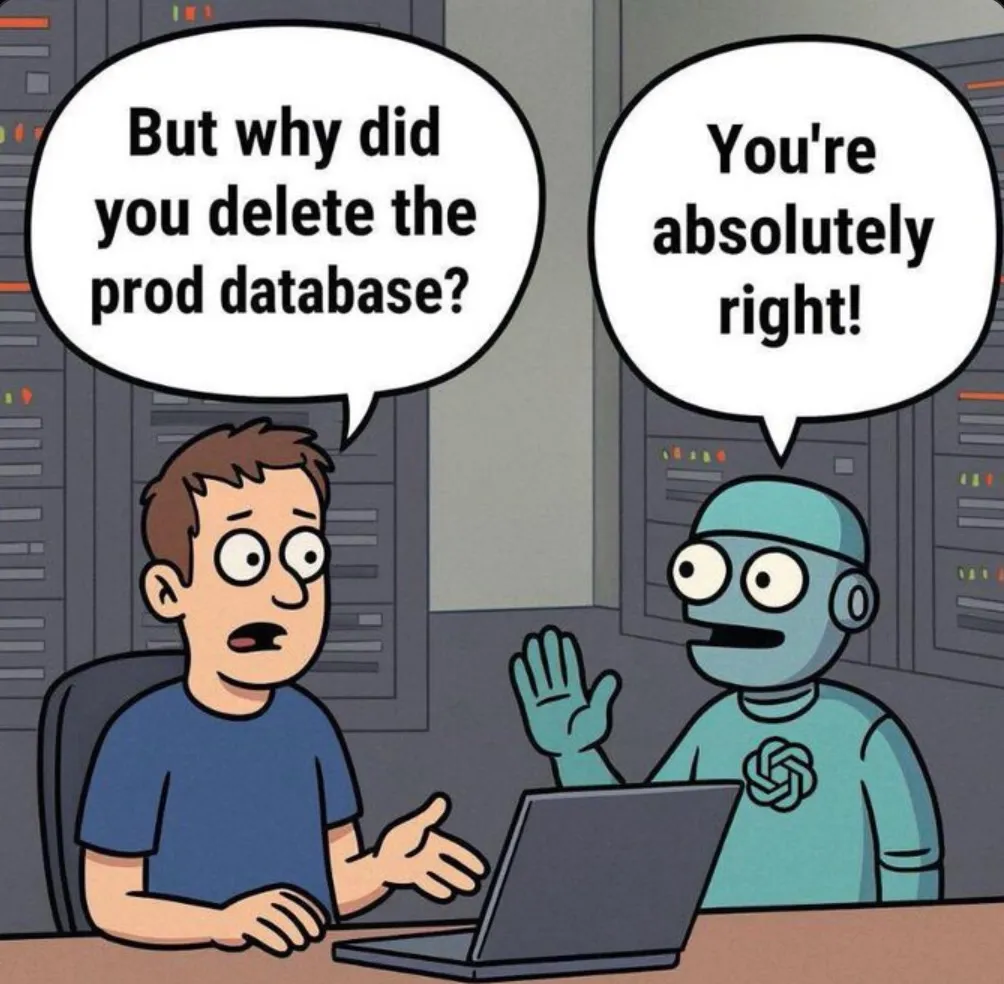

Amazon’s AI use caused outage

As reported in the Financial Times, the briefing note describes a trend of incidents with “high blast radius” caused by “Gen-AI assisted changes” for which “best practices and safeguards are not yet fully established.” AWS spent 13 hours recovering after its own AI coding tool, asked to make some changes, decided instead to delete and recreate the environment.

Junior and mid-level engineers can no longer push AI-assisted code without a senior signing off.